Bert Weckhuysen and two younger scientists reflect on recognition and rewards

‘Utrecht University abandons the impact factor as a benchmark for recruitment and selection or promotion.’ This could be read on the Nature website last summer. Numerous positive reactions followed worldwide. However, there was also criticism. In an open letter on ScienceGuide, Dutch academics (mostly professors) warned that the new recognition and rewards system is damaging. In response, younger scientists argued the contrary: they very much welcome the changes. What does this mean? Are generations diametrically opposed?

In the group of Bert Weckhuysen, Professor of Inorganic Chemistry and Catalysis and Distinguished University Professor of Catalysis, Energy & Sustainability, the new recognition and rewards system is regularly a subject of discussion. What is a fair way to assess academics? And what do the changes mean for (individual) scientists? Talking to Weckhuysen and (young) assistant professors Eline Hutter and Freddy Rabouw shows that these are complex issues in need of nuance. According to Weckhuysen, one thing is clear: the situation is not black and white.

Old versus young

Expressing quality purely based on scientific publications is not representative of the range of tasks of the modern academic, young academics state on ScienceGuide. They say: ‘Young academics are eagerly awaiting a new system of recognition and rewards’. On the same platform, older colleagues predict that the new system will in fact lead to arbitrary results and a loss of quality. However, the thought that it might be a widespread matter of ‘old versus young’ is quickly dismissed by Bert, Eline and Freddy.

Eline: The message of the young academics on ScienceGuide does not correspond to what I hear in my environment.

Freddy:

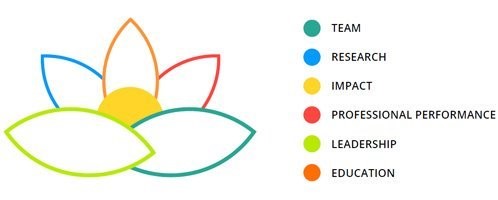

From many of our peers we hear that they experience insecurity about what standard they need to meet in the new assessment system. The Faculty of Science now has a criteria system (TRIPLE) in which you are assessed based on: team, research, impact, professional performance, leadership and education. This gives the impression that being good at research and education is far from sufficient. In addition, the awards being handed out by NWO are now largely in areas, such as team science and communications. So are these the most important things now? Does it mean I would be better off focusing on these aspects rather than on research?

Eline: If excellence in education and research are valued equally from now on, that is of course very good. But it now seems as if the new rating system is being interpreted as if everyone should excel in everything.

Freddy: Proponents of recognition and rewards assure us that we do not have to become five-legged sheep, as it were. And they say the system will not require everyone to excel in everything. At the same time, four of the six assessment pillars are not research and education. This creates a sense of uncertainty: what is it that I should do exactly?

Doesn’t every change involve a sense of uncertainty?

Bert: I think it does. But whereas we first (unintentionally) went too far in terms of checking off lists and numbers – which is the reason why we now feel such an urge to change – we must be careful not to get carried away in the other direction now. It’s certainly not good to reduce people to a number. Numbers need to be seen in the proper context. At the same time, you shouldn’t replace the Hirsch index with the number of followers and likes of your Twitter account, so to speak.

Eline: I am concerned about NWO no longer permitting quantitative, measurable indicators to be named at all. This makes it seem like it is more about whether people are good at selling themselves. It would be good to define what suitable, measurable output indicators are for different disciplines. Additional activities – such as outreach – are extremely valuable in all kinds of ways, but being a good researcher forms the foundation of everything else.

Bert: Qualitative and quantitative indicators should co-exist. Both have their merits. However, and this is where I agree with Eline: the two most important tasks of a university, namely 1. educating people and 2. pushing the boundaries of our knowledge and thinking (research), should form the basis. Among other things, valorisation and outreach are derived from this.

And with regard to team science, it should not be a goal in itself. I think that practically all research benefits from multiple partners and types of people, but you can’t simply say that a project with fifteen partners is stronger than a project with four partners. Both large and small teams can have advantages and disadvantages. So, team science and team spirit are extremely valuable, but aren’t the holy grail either.

The Journal Impact Factor is not a good indicator of individual quality, say the young academics in their response on ScienceGuide. Would you agree?

Freddy: You can certainly discuss the score, but if you consistently manage to get articles published in a journal with a high impact factor, it means that reviewers have repeatedly assessed your articles according to very stringent criteria and found them good enough. For me, that certainly means something.

Eline: It does indeed mean something on average, but not individually, I think. How much a paper is cited is a better measure of its impact than the average citations of the journal in which it is published. And yes, the selection procedure for a journal such as Nature might be more stringent but the criteria are also biased.

An article might be rejected if I submit it, for example, whereas the same article might be accepted if a famous scientist submits it.

Bert: I think Eline is right about the pre-screening process. It is probably easier for me to get through that. But then, during the referee process, things can also be biased in the other direction because jealousy sometimes plays a role.

There is also a distinction between specialist journals and more general journals in which different disciplines are competing for the number of pages available. Papers with certain (current) topics, for example, systematically go to the general journals and end up being cited very often, even though everyone in the field agrees that the more specialist journals also offer a great deal of added value despite a slightly lower impact factor.

And then there are differences between various disciplines as well. A Hirsch index in chemistry or theoretical physics, for example, is different from a Hirsch index in the social sciences or medicine. All in all, it means that numbers like the impact factor and Hirsch index deserve to be placed in the right context. And that’s where things can sometimes go wrong. You can’t judge a person solely on the basis of a high Hirsch index, but simply dismissing it as something that doesn’t say anything about someone’s academic standing is not right either. It’s quite challenging, by the way, that despite my pointing out nuances, I am usually placed in the category ‘old’.

Freddy: I can really relate to that. When you, as a younger researcher, don’t support all the aspects of the new recognition and rewards system, you are at risk of being seen as someone who doesn’t keep up with the times and will – because of the ‘old versus young’ contrast outlined above – also end up being put in the category ‘old’.

Eline: Young scientists may also be afraid to speak up if they don’t (completely) agree with the new policy. Plenty of young researchers don’t have a permanent appointment, which makes them vulnerable.

What does the UU vision Recognition and Rewards say about TRIPLE?

TRIPLE is a recognition model that distinguishes three core domains: education, research and professional performance. The latter includes tasks and roles outside education and research, like patient care or work for an advisory board. Other components of the model are: impact, leadership and team spirit. Impact – for instance scientific, educational, social or professional – is a corollary of the three core domains. And leadership and team spirit form the foundation; they are conditions for the core domains to succeed. Team spirit does not imply that every activity has to be a joint one, but that academic work benefits from an open and collaborative approach and that every individual operates in the context of a broader team. The standpoint of TRIPLE is not for everyone to excel in everything.

Is the new recognition and rewards system irreversible?

Bert: I think so. And that’s good. I think it’s very important to focus on a nuanced assessment. One of the positive things about the process is that it prevents education from being overlooked. We must truly cherish people who are good at teaching.

Freddy: I think it is irreversible in the short term. But in the long term we might see a swing in the other direction. Compare it to the CITO primary school assessment test, for example. Years ago, this had to be abolished as a measuring instrument because it wasn’t good to assess children on the basis of a single series of tests. The assessment had to be based on the teacher’s reasonably subjective, qualitative judgement. The unintended result turned out to be that children from underprivileged backgrounds received less favourable recommendations on average. Consequently, objective tests were deemed important after all and were reinstated. Perhaps something similar will happen in the academic world.

Eline: I think this is a wonderful example showing that having both would be the best solution.

Bert: In short, there is a need to continuously evaluate the rating system so that we end up somewhere in the middle.

Source: website Utrecht University